When Hidden Scents was written, back in 2015, it was certain that we wouldn't see an artificial smelling machine on par with the visual object recognition of the time until we created robots that were indistinguishable from humans: robots that grew up like a child does, complete with their own physiological and emotional responses to their environment, especially their social environment, and of course with their own resulting autobiographies.

Not until then would a robot, or even a simple computing device, be able to perform anything even close to the phenomenon of olfactory experience. And that's mostly because every one of us smells things differently, whether it's genetics inherited before birth, anosmic dysfunction from viral infections, olfactory desensitization from old age, or just differences in cultural or personal preference. Without all this mess, no matter how hard you try to replicate the process of olfaction, you can't really call it the same thing as smelling unless you have an authentic adult human in the equation.

Granted, there are reverse-engineered electronic noses implanted on the bodies of roboticized locust cyborgs that have been programmed to detect explosives. Or the less sci-fi e-noses used to detect fake whiskey. But that's not what we're talking about here.

The headlines pasted below show us the dawn of the baby-bots: robots that start from scratch, learn to understand themselves, and maybe even accumulate an autobiographical identity.

Researchers trained an AI model to 'think' like a baby, and it suddenly excelled

Jul 2022, phys.org

The exciting finding by Piloto and colleagues is that a deep-learning AI system modeled on what babies do, outperforms a system that begins with a blank slate and tries to learn based on experience alone.

via Princeton University: Luis S. Piloto et al, Intuitive physics learning in a deep-learning model inspired by developmental psychology, Nature Human Behaviour (2022). DOI: 10.1038/s41562-022-01394-8

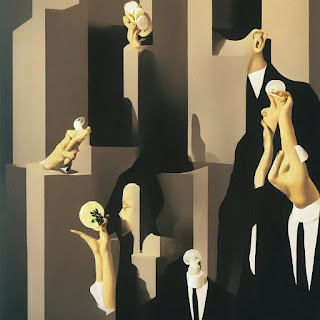

Image credit: AI Art - Fragrance Advert by Magritte - 2022: portrait fragrance advertising campaign by magritte

Engineers build a robot that learns to understand itself, rather than the world around it

Jul 2022, phys.org

Robot able to learn a model of its entire body from scratch, without any human assistance by creating a kinematic model of itself, and then using its self-model to plan motion, reach goals, and avoid obstacles in a variety of situations. It even automatically recognized and then compensated for damage to its body. The researchers placed a robotic arm inside a circle of five streaming video cameras. The robot watched itself through the cameras as it undulated freely. After about three hours, the robot stopped. Its internal deep neural network had finished learning the relationship between the robot's motor actions and the volume it occupied in its environment.

Yeah, I'm creeped out.

via Columbia University School of Engineering and Applied Science: Boyuan Chen, Fully body visual self-modeling of robot morphologies, Science Robotics (2022). DOI: 10.1126/scirobotics.abn1944.
