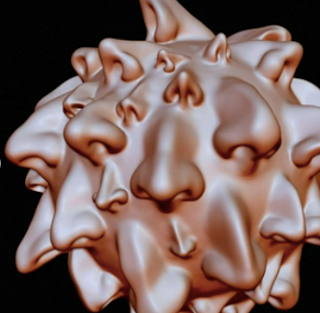

AKA Alpha Nose

Submitted to bioRxiv's preprint server in September/December 2022, and published in Science in September 2023, it's the first model to out-smell regular humans. If you think your sentient sovereignty is threatened by a computer that can draw a picture, then it's probably time for you to get some benzodiazepines.

You give this thing a molecule and it will tell you what it smells like. More specifically, if you type the name of a chemical into a computer, it will give you words that describe the way that chemical smells, and it will be better at doing it than a human.

Ray Kurzweil smirks. (Because it's not 2030 yet.)

The language of smell has been a tricky thing for a long time. It became pretty obvious just how tricky when we all woke up one day to realize that you can't google smells. And then, by extension, we realized that the Internet doesn't smell, and something must be wrong, because if it's not on the internet, then it doesn't exist.

Attempts were made to correct this. The DREAM dataset, sometimes referred to as Keller 2017, sometimes as the Rockefeller study, was the first to use the power of machine learning to crunch chemoinformatics and natural language into a prediction machine for speaking in smells. But even they had some problems, and were not able to score better than humans. Only five years later, and it's done (with the help of the Google Brain, of course).

Today, the Internet can smell.

Introductory Remarks:

- “In olfaction, no reliable instrumental method of measuring odor perception exists, and trained human sensory panels are the gold standard for odor characterization.” (17)

- “The model is as reliable as a human in describing odor quality: on a prospective validation set of 400 novel odorants, the model-generated odor profile more closely matched the trained panel mean than did the median panelist.”

- The model "performs roughly on par with the median human panelist, beating a chemoinformatic baseline."

- "The model is as reliable as a human in describing odor quality"
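The "closer to the panel mean than the median panelist" claim is easier to picture with a toy version of the comparison. This is only a sketch: the ratings are invented, the five descriptors stand in for the real 55-word lexicon, and comparing each panelist to the mean of the *other* panelists is an assumption about how such a score would fairly be computed, not a transcription of the paper's code.

```python
from math import sqrt
from statistics import median

def pearson(x, y):
    """Pearson correlation between two equal-length rating vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def mean_profile(profiles):
    """Element-wise mean of several rating vectors."""
    return [sum(col) / len(col) for col in zip(*profiles)]

# Toy rate-all-that-apply ratings (0-5) for ONE odorant.
# Every number here is made up for illustration.
DESCRIPTORS = ["fruity", "sweet", "floral", "green", "musk"]
panel = {
    "panelist_a": [4, 3, 1, 0, 0],
    "panelist_b": [5, 4, 0, 1, 0],
    "panelist_c": [2, 1, 0, 3, 1],
    "panelist_d": [0, 1, 3, 2, 2],
}
model_prediction = [4.2, 3.1, 0.6, 0.9, 0.2]  # hypothetical model output

def score_against_panel(panel, model_prediction):
    """Return (model score, median panelist score) as correlations."""
    panel_mean = mean_profile(list(panel.values()))
    panelist_scores = []
    for name, ratings in panel.items():
        # Assumption: leave the panelist out of the mean they are judged against.
        others = [r for other, r in panel.items() if other != name]
        panelist_scores.append(pearson(ratings, mean_profile(others)))
    model_score = pearson(model_prediction, panel_mean)
    return model_score, median(panelist_scores)

model_score, median_panelist = score_against_panel(panel, model_prediction)
print(f"model vs panel mean:  {model_score:.2f}")
print(f"median panelist:      {median_panelist:.2f}")
```

With these invented numbers the model lands closer to the panel mean than the median panelist does, which is the shape of the paper's headline result, not a reproduction of it.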

Methods:

- "To generate odor-relevant representations of molecules, we constructed a Message Passing Neural Network, a specific type of graph neural network, to map chemical structures to odor percepts. Each molecule is represented as a graph, with each atom described by its valence, degree, hydrogen count, hybridization, formal charge, and atomic number. Each bond is described by its degree, aromaticity, and whether it is in a ring. Unlike traditional fingerprinting techniques, which assign equal weight to all molecular fragments within a set bond radius, a GNN can optimize fragment weights for odor-specific applications."

- "To train the model, we curated a reference dataset of approximately 5000 molecules, each described by multiple odor labels (e.g. creamy, grassy), by combining the Goodscents and Leffingwell flavor and fragrance databases."

- Also, for novel odors, "We trained a cohort of subjects to describe their perception of odorants using the Rate-All-That-Apply method (RATA) and a 55-word odor lexicon."
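For anyone who wants to see what "each molecule is represented as a graph" and "message passing" look like in practice, here is a bare-bones sketch. It is not the paper's network: the ethanol graph is typed in by hand, only four of the listed atom features are used, and the "weights" are fixed toy values (bond order) where a real MPNN would learn them from the odor labels. All function names are made up for this example.

```python
# Heavy-atom graph for ethanol (SMILES "CCO"), written out by hand.
# Atom features: [atomic_number, degree, hydrogen_count, formal_charge]
atoms = [
    [6, 1, 3, 0],  # CH3 carbon
    [6, 2, 2, 0],  # CH2 carbon
    [8, 1, 1, 0],  # OH oxygen
]
# Bonds: (atom_i, atom_j, [bond_order, is_aromatic, is_in_ring])
bonds = [
    (0, 1, [1, 0, 0]),
    (1, 2, [1, 0, 0]),
]

def neighbors(atom_index, bonds):
    """Yield (neighbor_index, bond_features) for every bond touching the atom."""
    for i, j, feats in bonds:
        if i == atom_index:
            yield j, feats
        elif j == atom_index:
            yield i, feats

def message_passing_step(states, bonds):
    """One round: each atom adds the bond-weighted states of its neighbors."""
    new_states = []
    for v, state in enumerate(states):
        message = [0.0] * len(state)
        for u, bond_feats in neighbors(v, bonds):
            weight = bond_feats[0]  # toy choice: bond order; a trained model learns this
            for k, value in enumerate(states[u]):
                message[k] += weight * value
        new_states.append([s + m for s, m in zip(state, message)])
    return new_states

def readout(states):
    """Sum atom states into one fixed-length vector for the whole molecule."""
    return [sum(col) for col in zip(*states)]

def embed(atoms, bonds, rounds=2):
    """Run a few rounds of message passing, then pool into a molecule vector."""
    states = [[float(x) for x in a] for a in atoms]
    for _ in range(rounds):
        states = message_passing_step(states, bonds)
    return readout(states)

print(embed(atoms, bonds))
```

After each round, an atom's state carries information from atoms one bond further away, which is how the network can weigh molecular fragments differently instead of treating every fragment within a fixed radius the same way. In the real model, the pooled vector would feed a layer that predicts the odor labels.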

Results:

- The result is called a Principal Odor Map (POM), which:

- faithfully represents known perceptual hierarchies and distances

- extends to novel odorants

- is robust to discontinuities in structure-odor distances

- generalizes to other olfactory tasks.

Notes of Interest:

- The term "Odor Islands" is used when referring to certain globs of similar odors in odor space; just a cool term that was never able to exist before this model was created.

- Another term, "ground-truth," is used while describing the model's ability to match novel odorants ("establish the ground-truth odor character for novel odorants"). It's funny because the term "baseline" is ambiguous by comparison: it can sometimes refer to the previous chemoinformatic baselines, which are now inferior.

- On Musk: "When we disaggregate performance by odor label, the model is within the distribution of human raters for all labels except musk" (which they later explain by musk having 5 structural classes, as opposed to garlic or fishy, which have clear structural determinants like sulfur or amines, and also by the "well-documented phenomenon" of genetic variability in musk perception).

- On Familiarity: "[W]e see strong panelist-panel agreement for labels describing common food smells and weak agreements for labels like musk and hay."

- On Flavor and Fragrance vs Everyday Smells: The model is better for labels that have lots of training data, like fruity, sweet, and floral, and worse for labels that have little ("ozone, sharp, fermented").

- On Sulfur: Disaggregated by chemical class, sulfur-containing molecules show the strongest performance.

- On Why the Language of Smell is Hard for Humans: People guess the odor wrong (aka correlation to panel mean is low) because of:

1. genetic diversity, as with musk* (problems with the humans)

2. structural diversity, as with musk (problems with the chemoinformatics data)

3. unfamiliarity, as with ozone (again problems with the humans**)

*I thought genetic diversity was also strong for anything with a specific anosmia, like putrescine or trimethylamine. Then again, they didn't test "bad" smells, or what I call everyday smells; the traditional Dravnieks dataset is ultimately a legacy of the flavor and fragrance industry, so it weighs heavier on good smells than bad.

**Although unfamiliarity is a reason for this type of identification difficulty, the blame should be extended beyond the individual human to our society, or maybe to a bit of the fragrance industry and a bit of academia. The semantic dataset, which I will call the RATAset for "rate-all-that-apply" (like the opposite of multiple choice, and great for naming smells), still only uses 55 terms taken from Goodscents and Leffingwell. I would be willing to bet that more people actually know what ozone smells like, for example; they just don't have the right language at hand for naming it.

- On Odorant Sample Contamination: The entire section on quality control is fascinating, and news to me. "Chemical materials are impure -- a fact too often unaccounted for in olfactory research. (24: M. Paoli, D. Münch, A. Haase, E. Skoulakis, L. Turin, C. G. Galizia, Minute Impurities Contribute Significantly to Olfactory Receptor Ligand Studies: Tales from Testing the Vibration Theory. eneuro. 4, ENEURO.0070–17.2017 (2017).)"

- Contamination, continued: There were cases where the descriptions given by panelists seemed inaccurate, and the samples were later shown by GC/MS QC to be contaminated (so the panelists were right; their guess didn't match the molecule as named by the lab that sent the sample, but it did match the GC/MS). In some cases even the model got it "wrong," which implies that much of the training data is wrong, which means many of the samples of that particular chemical are likely to be contaminated. They only tested 50 of the 400 with GC/MS, but of the 50, they removed 26!

- Contaminated Vials vs Non-Contaminated Datasets: The datasets do have words like burnt, fishy, animal, musty, sour; but these are all words that can be used to describe good parts of flavors and fragrances ("slightly burnt" or "slightly fishy"). People don't use the word semen, ever; and you will almost never see that word written in regular discourse about olfaction or the language of smell, or even when talking about linden blossoms (go right ahead, try it for yourself); it's like we're literally not allowed to talk about it. Same with the words fecal, shit, and so on. There is no "dirty sock," "cigarette butt," or "cat pee" in either the Goodscents or the Leffingwell datasets. Which leads us to this --

- They recommend characterizing the perceptual quality of contaminants.

- "[I]t is not safe to assume that the odor percept of a purchased chemical is due to the nominal compound." (And they add that non-flavor-and-fragrance chemical commodities are not incentivized to minimize contaminants.)

- Beyond the Perimeter of Ignorance: They created a potential odor space of 500,000 odorants "unknown to science or industry". And then they compute for us that it would take "70 person-years of continuous smelling time" to collect. (That's a lot of smelling time.)

- Limitations: The model's main limitation is that it can predict the odors of only single molecules; in the real world of perfumes and stinky trash bags, smells are almost always olfactory medleys. “Mixture perception is the next frontier,” Mayhew says. The vast number of possible combinations makes predicting mixtures exponentially more difficult, but “the first step is understanding what each molecule smells like,” Meyer Rojas says. (Scientific American, Dec 2023, "Machine Learning Creates a Massive Map of Smelly Molecules," https://www.scientificamerican.com/article/machine-learning-creates-a-massive-map-of-smelly-molecules/)
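Two of the numbers in these notes are worth a quick back-of-envelope check: the "70 person-years" for 500,000 odorants, and the 26 of 50 samples removed after GC/MS. The sketch below just does the division; the function names are made up, and the per-molecule figure is my own derived number, not one quoted from the paper.

```python
# Average year length in seconds (365.25 days), used to convert person-years.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

def minutes_per_odorant(person_years, n_odorants):
    """Continuous smelling time per odorant implied by a person-year budget."""
    return person_years * SECONDS_PER_YEAR / n_odorants / 60

def fraction_removed(n_removed, n_tested):
    """Share of QC-tested samples that were thrown out."""
    return n_removed / n_tested

print(f"{minutes_per_odorant(70, 500_000):.1f} minutes per odorant")
print(f"{fraction_removed(26, 50):.0%} of GC/MS-tested samples removed")
```

That works out to roughly 74 minutes of smelling per molecule, presumably spread across many panelists and repeat ratings (that split is a guess), and to just over half of the GC/MS-tested samples being removed.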

Notes:

via Michigan State University Department of Food Science and Human Nutrition, University of Reading Department of Food and Nutritional Sciences, Google, and Monell Chemical Senses Center: A principal odor map unifies diverse tasks in olfactory perception. Brian Lee, Emily Mayhew, Joel Mainland. Science. 2023 Sep;381(6661):999-1006. doi: 10.1126/science.ade4401.

Preprint fulltext:

Formal citation:

Lee BK, Mayhew EJ, Sanchez-Lengeling B, Wei JN, Qian WW, Little KA, Andres M, Nguyen BB, Moloy T, Yasonik J, Parker JK, Gerkin RC, Mainland JD, Wiltschko AB. A principal odor map unifies diverse tasks in olfactory perception. Science. 2023 Sep;381(6661):999-1006. doi: 10.1126/science.ade4401. Epub 2023 Aug 31. PMID: 37651511.

Leffingwell Flavor-Base http://www.leffingwell.com/flavbase.htm

The Good Scents Company http://www.thegoodscentscompany.com
